One important concept in modern web applications is backend communication. It refers to how the components of a system talk to each other: frontend applications with backends, and backend applications with one another.

One of the most popular patterns for backend communication is the Request-Response Model. In this article, I will discuss how the Request-Response Model works at a high level, how requests and responses are structured, and the challenges the model poses.

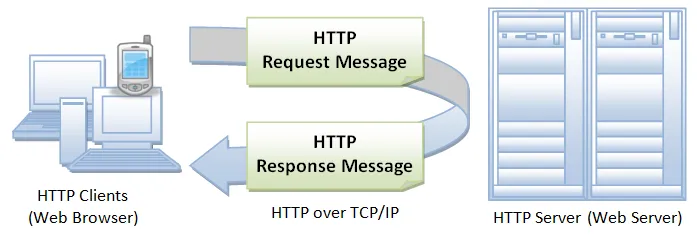

The request-response model is a classic and very common pattern; if you have built a backend application, you have probably used it. The client (a frontend application or another backend application) sends an HTTP request to the server. The server parses the request, processes it by performing the necessary operations, and sends a response back to the client. Finally, the client parses and consumes the response.
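To make the cycle concrete, here is a minimal in-process sketch. It is not real HTTP: JSON-encoded bytes stand in for the message on the wire, a direct function call stands in for the network round trip, and the names `USERS`, `server_handle`, and `client_get_user` are made up for illustration.

```python
import json

# Hypothetical in-memory data the server can look up.
USERS = {1: "Ada", 2: "Linus"}


def server_handle(raw_request: bytes) -> bytes:
    """Server side: parse the request, process it, send back a response."""
    request = json.loads(raw_request)             # parse the request
    name = USERS.get(request["user_id"])          # process it
    if name is None:
        response = {"status": 404, "body": None}
    else:
        response = {"status": 200, "body": {"name": name}}
    return json.dumps(response).encode("utf-8")   # serialize the response


def client_get_user(user_id: int) -> dict:
    """Client side: send a request, then parse and consume the response."""
    raw_request = json.dumps({"method": "GET", "user_id": user_id}).encode("utf-8")
    raw_response = server_handle(raw_request)     # stands in for the network
    return json.loads(raw_response)


found = client_get_user(1)
missing = client_get_user(99)
print(found["status"], found["body"])
print(missing["status"], missing["body"])
```

The client never sees the server's internals. It only sees the bytes that come back, which is why both sides have to agree on the message structure described next.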

Anatomy of the Request/Response Model

Before a request is sent, the server expects a pre-defined structure that the client has to adhere to. An HTTP request includes the type of request (the method, e.g. GET, POST or PUT), the resource it targets, headers, and an optional data payload (the body) carrying the data that the client wants to send to the server. The request also has a boundary that marks where one request ends and the next begins. In HTTP/1.1, for example, a blank line ends the header section, and the Content-Length header or chunked transfer encoding determines where the body ends.

On the other hand, the response structure includes a status code, which tells the client whether the request succeeded (for example, 200 for success or 404 when the resource was not found). It also contains the data that the server sends back to the client. Like the request, the response has a boundary defined by the protocol, so the client knows where the message ends and can parse it correctly.
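The sketch below shows one way this structure and its boundaries could be parsed for HTTP/1.1. It is deliberately simplified: it handles only `Content-Length` framing (not chunked transfer encoding), it omits headers such as `Host` that real requests carry, and `read_message`, `build_message`, and the `/videos` paths are hypothetical names chosen for the example.

```python
def read_message(buffer: bytes):
    """Split the first HTTP/1.1 message off `buffer`.

    Returns (start_line, headers, body, remaining_bytes).
    """
    # A blank line (CRLF CRLF) marks the end of the header section.
    head, _, rest = buffer.partition(b"\r\n\r\n")
    start_line, *header_lines = head.decode("iso-8859-1").split("\r\n")
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    # Content-Length tells the receiver where the body (and message) ends.
    length = int(headers.get("content-length", "0"))
    return start_line, headers, rest[:length], rest[length:]


def build_message(start_line: str, body: bytes) -> bytes:
    """Build a minimal message with a correct Content-Length header."""
    head = f"{start_line}\r\nContent-Length: {len(body)}\r\n\r\n"
    return head.encode("iso-8859-1") + body


# Two requests sent back to back on the same connection.
stream = (
    build_message("POST /videos HTTP/1.1", b'{"title": "demo"}')
    + build_message("GET /videos/42 HTTP/1.1", b"")
)
start_line, headers, body, remaining = read_message(stream)
method, path, version = start_line.split(" ")
next_start_line = read_message(remaining)[0]

# A response carries a status code in its start line.
response = build_message("HTTP/1.1 201 Created", b'{"id": 42}')
status_code = int(read_message(response)[0].split(" ")[1])

print(method, path, body)
print(next_start_line)
print(status_code)
```

Without the boundary, the parser could not tell that the bytes after the first body belong to a second request.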

Building a Video Upload Service with the Request-Response Model (High Level)

There are two different approaches you can take when building a video upload service using the request-response model: sending a large request with the video or chunking the video and sending a request per chunk.

Sending one large request with the video is simple and straightforward: the client sends the whole file to the server in a single request. The drawback is that this can be slow and unstable with large files, especially on slower networks, because a failure partway through means starting over.

Chunking the video and sending a request per chunk is more reliable. The client splits the video into smaller chunks and sends each chunk as a separate request, and the server processes each chunk before the next one is sent. This lets the client resume the upload from where it left off after a network error.
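Here is a rough sketch of the chunked approach with resume. Everything runs in memory: `UploadServer` is a hypothetical stand-in for a real upload endpoint, each `put_chunk` call represents one request-response round trip, and `fail_on` only exists to simulate a dropped connection. The chunk size is tiny so the example is easy to follow.

```python
CHUNK_SIZE = 4  # bytes; a real service would use a much larger chunk size


class UploadServer:
    """Hypothetical server that stores the chunks of one upload by index."""

    def __init__(self):
        self.chunks = {}
        self.fail_on = set()  # chunk indexes that fail once (simulated error)

    def put_chunk(self, index: int, data: bytes) -> dict:
        """Handle one 'request' carrying one chunk."""
        if index in self.fail_on:
            self.fail_on.discard(index)
            raise ConnectionError(f"chunk {index} was lost")
        self.chunks[index] = data
        return {"status": 200, "received": index}

    def next_missing(self) -> int:
        """Tell the client which chunk to resume from."""
        index = 0
        while index in self.chunks:
            index += 1
        return index

    def assemble(self) -> bytes:
        return b"".join(self.chunks[i] for i in sorted(self.chunks))


def upload(server: UploadServer, video: bytes, start: int = 0) -> None:
    """Send one request per chunk, beginning at chunk number `start`."""
    total_chunks = -(-len(video) // CHUNK_SIZE)  # ceiling division
    for index in range(start, total_chunks):
        chunk = video[index * CHUNK_SIZE:(index + 1) * CHUNK_SIZE]
        server.put_chunk(index, chunk)


video = b"0123456789abcdef!!"  # stand-in for real video bytes
server = UploadServer()
server.fail_on.add(2)          # the third chunk will fail the first time

try:
    upload(server, video)
except ConnectionError:
    # Resume from the first chunk the server has not stored yet,
    # instead of re-sending the whole file.
    upload(server, video, start=server.next_missing())

print(server.assemble() == video)
```

Only the chunks from the failed one onward are sent again, which is the main advantage over a single large request.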

Challenges of the Request-Response Model

The Request-Response Model is a popular and effective model for backend communication, but it has limitations, particularly in scenarios that require real-time communication, such as notification services or chat applications. In this model the server can only respond to a request; it cannot send data to the client on its own initiative. These applications need a different communication model that supports real-time communication and long-running requests.

Another challenge of the Request-Response Model is dealing with very long requests. A request that carries a large payload or takes a long time to process can cause performance issues and slow response times. In such cases, it may be necessary to use alternatives such as chunking the data.

Conclusion

The Request-Response Model is a popular and effective model for backend communication. It allows the client and server to communicate easily, with the server processing the request and sending a response back to the client. However, it has its limitations, particularly in scenarios that require real-time communication or involve very long requests. As a developer, it's essential to understand the pros and cons of different communication models and choose the one that best suits the application's needs.
